What was my cash balance on March 15? In dollars?

The naive answer to "what was my cash balance on March 15?" is one line of SQL:

SELECT SUM(...) FROM entries

WHERE account_id = $1 AND created_at <= '2026-03-15';

That works for one currency. In dollars breaks it in three different ways.

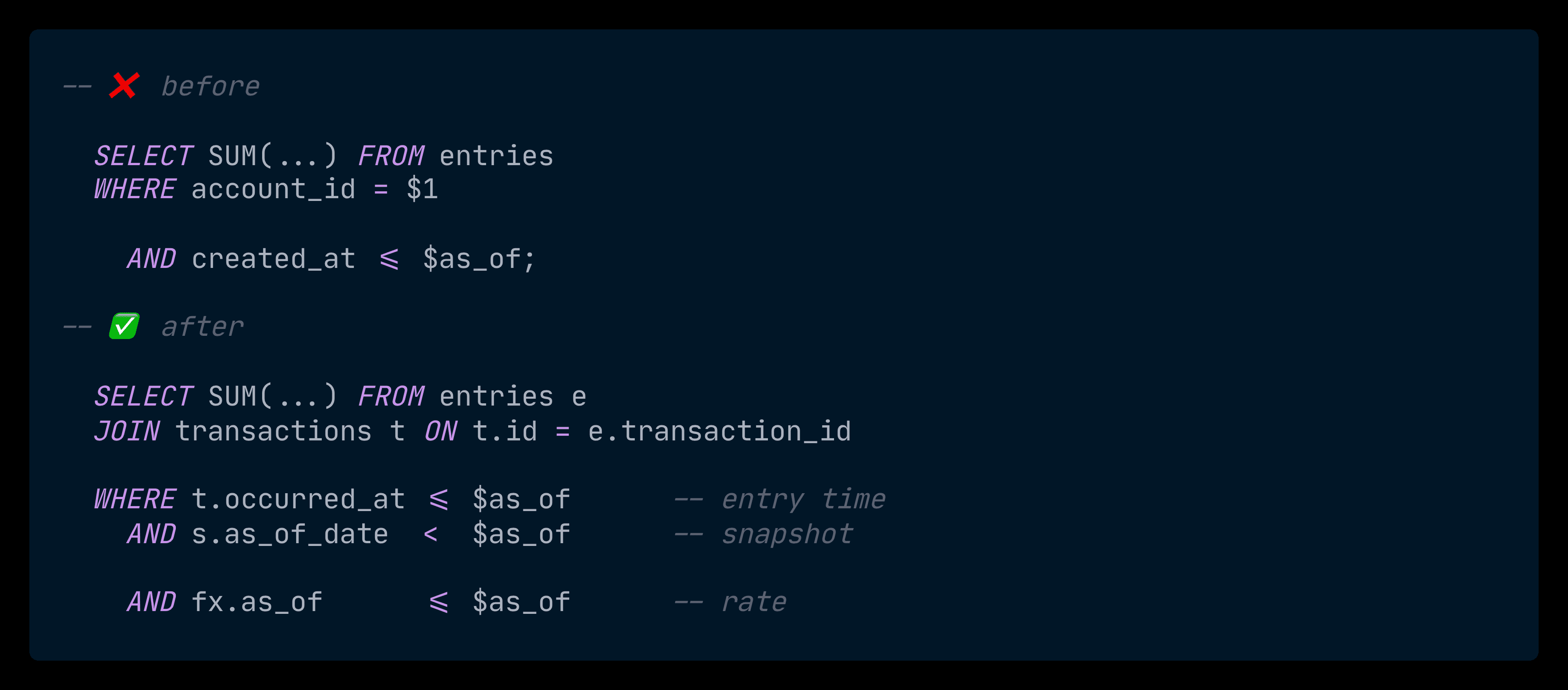

The query that looks right and isn't

Three things break, and none of them throw an error.

SELECT SUM(

CASE

WHEN direction = 'in' THEN amount

ELSE -amount

END

) AS balance

FROM entries

WHERE account_id = $1

AND posted_at <= $2; -- ✅ correct business timestamp

Wrong timestamp. created_at tells you when the row was inserted into Postgres, not when the business event happened. A booking that occurred in January but got backfilled today gets dated today. The answer to "as of March 15" silently includes events from years earlier, all stamped with this morning's timestamp.

The fix is to filter on transactions.occurred_at instead. (Why I keep both is a Week 1 decision — see DESIGN.md §9.)

No currency awareness. Entries are stored in their native currency, in integer minor units. Paise. Cents. Yen. Adding 1000 paise + 1000 cents produces a number, but it's not a currency. The query gives you a meaningless integer with full confidence.

Full scan, every query. Postgres reads every row in the account's history, sums them, returns. Every dashboard load. Every API call. Acceptable on a fresh database. Painful once the account has years of activity.

Three silent failures. The query runs and returns a wrong number.

Snapshots and spot rates

The fixes for failures one and two come from patterns that production ledgers settle into for the same reasons.

Snapshots. Recomputing an account's balance from scratch on every query is wasteful past a certain size. The standard pattern is to keep precomputed balances at known points in time — call them snapshots — and roll forward from the most recent one. End-of-day balance for March 14, plus everything that happened on March 15, is the balance as of March 15. The aggregation work has already been done; the query just replays the delta.

Spot rates. FX rates move, so conversion has a subtle trap: if you always convert at "today's rate," the same historical transaction returns a different answer every day. The fix is to record FX rates as time-stamped observations and convert using the rate that was current at the query's as_of — never today's rate when the question is about the past.

Both ideas reduce to the same engineering instruction: store timestamps, replay deltas.

What landed this week

Two new tables, three new helpers, one endpoint.

account_snapshots— per-account, per-currency, per-date pre-computed balance.fx_rates— point-in-time rate observations.GetBalance(orgID, code, asOf)— balance at a moment, via snapshot + delta replay.LookupRate(from, to, asOf)— most recent rate observation at or beforeasOf.ConvertBalance(b, target)— converts a balance into a target currency at the balance's own point in time.GET /accounts/:code/balance?as_of=...&in=...— the public endpoint.

A real call against the seed dataset (the demo booking sits at occurred_at = 2026-04-21):

curl 'localhost:8080/accounts/guest_payments/balance?as_of=2026-04-30T00:00:00Z&in=USD'

# {"account":"guest_payments","currency":"USD","balance":11891,"as_of":"2026-04-30T00:00:00Z"}

₹10,000 of guest payments → $118.91, valued at the FX rate that was current on April 15 (the most recent rate observation before the query date).

The whole architecture has one rule: every timestamp in this query flows from the same as_of. The entries filter, the snapshot lookup, the FX rate selection — all three line up against the same value. Get one of them out of sync and you get an answer that looks plausible and isn't.

Three clocks, one query

To answer "what was the cash balance on March 15, in dollars," three timestamps inside the database have to agree.

Clock one: when the entry happened. Each entry belongs to a transaction, and the transaction has an occurred_at — the timestamp of the business event. That's the column the query filters on, not created_at. A booking from January, backfilled today, is dated January.

Clock two: which snapshot to start from. I don't sum from time zero. The query finds the most recent account_snapshots row whose as_of_date is strictly before March 15, then replays only the entries between that date and March 15.

-- nearest snapshot strictly before our target date

SELECT balance, (as_of_date + INTERVAL '1 day')::timestamptz

FROM account_snapshots

WHERE account_id = \(1 AND as_of_date < \)2::date

ORDER BY as_of_date DESC LIMIT 1;

-- replay only the entries since that snapshot

SELECT COALESCE(SUM(CASE WHEN e.direction='in' THEN e.amount ELSE -e.amount END), 0)

FROM entries e

JOIN transactions t ON t.id = e.transaction_id

WHERE e.account_id = $1

AND t.occurred_at >= $2 -- snapshot end

AND t.occurred_at <= $3; -- the as_of we were asked about

If there's no snapshot, the lower bound falls back to time zero. Slow, but always correct. A make snapshot target is on this week's todo — once it's wired up, a scheduled job will seed snapshots for recent dates so the delta stays small.

Clock three: which FX rate was current. It's the latest observation in fx_rates whose as_of is at or before March 15. LookupRate returns ErrNoRate if no rate has been seen yet — better to fail loudly than to invent a rate.

That gets us a number. Multiplying it by the balance is where I shipped a bug.

The first version of Convert parsed the NUMERIC(20,10) rate as a fixed-point integer and multiplied. It passed every test I'd written. It also rounded negative amounts the wrong way — toward zero instead of away from it. With rate 0.01195, converting +10,000 paise returned +120 cents. The same conversion of -10,000 returned -119, not -120. Liability and revenue accounts carry negative balances by convention, so any balance query in a foreign currency would silently round the wrong way for those accounts.

The rewrite uses math/big.Rat — so neither integer overflow nor floating-point drift is possible — and rounds half-away-from-zero, so +X and -X round to matching magnitudes.

func (fx *FXRate) Convert(amount int64) (int64, error) {

rate, ok := new(big.Rat).SetString(fx.Rate)

if !ok {

return 0, fmt.Errorf("ledger: invalid rate %q", fx.Rate)

}

product := new(big.Rat).Mul(rate, new(big.Rat).SetInt64(amount))

// half-away-from-zero rounding

half := new(big.Rat).SetFrac64(1, 2)

if product.Sign() < 0 {

product.Sub(product, half)

} else {

product.Add(product, half)

}

result := new(big.Int).Quo(product.Num(), product.Denom())

if !result.IsInt64() {

return 0, fmt.Errorf("ledger: conversion overflow")

}

return result.Int64(), nil

}

The tests that lock it in

Two tests carry the weight.

The first proves the snapshot pattern is safe. With and without a snapshot present, the query has to return the same number — otherwise the optimization is just a fast way to lie. The test wipes any existing snapshots, captures the naive balance for every demo account, computes a snapshot, and re-runs the query.

// before — sums every entry from time zero

naive, _ := GetBalance(ctx, pool, orgID, "cash", nil)

ComputeSnapshots(ctx, pool, orgID, snapDate)

// after — uses snapshot + delta replay

fast, _ := GetBalance(ctx, pool, orgID, "cash", nil)

if fast.Amount != naive.Amount {

t.Fatalf("accelerated=%d, naive=%d (must match)",

fast.Amount, naive.Amount)

}

Different paths, same answer. If a future change to the SQL or the snapshot schema breaks this invariant, the test fails on the next CI run.

The second test is one assertion that locks in the rounding fix:

{

name: "negative amount mirrors positive",

rate: "84.1000000000",

amount: -10000,

expected: -841000,

},

-10000 paise × 84.10 = -841000 paise. Symmetric to the positive case. Without big.Rat, the assertion fails — this test was the first thing to catch the bug.

Why does this matter? Today, the impact is small: every entry stays balanced in its native currency, so the bug only shows up as a one-cent error on negative-balance accounts when someone asks for them in a foreign currency. Trivial in isolation. But ledger systems grow — per-entry conversions, pre-computed converted snapshots, foreign-currency reports — and asymmetric rounding turns into drift scattered across operations, none of them individually wrong.

Next Sunday

Week 3 is the React side — a live T-account visualizer. Pick a transaction, see all four affected accounts update on screen as the entries land. The ledger has been API-only so far; next Sunday it gets a UI.

I'm posting one of these every Sunday until the 6 weeks are up. Subscribe if you want the next one.